Teaching

How to Build a Brain from Scratch

This advanced option course discusses the search for a general theory of learning and inference in biological brains. It draws upon diverse themes in the fields of psychology, neuroscience, machine learning and artificial intelligence research. We begin by posing broad questions. What are brains for, and what does it mean to ask how they “work”? Then, over a series of lectures, we discuss parallel computational approaches in machine learning/AI and psychology/neuroscience, including reinforcement learning, deep learning, and Bayesian methods. We contrast computational and representational approaches to understanding neuroscience data. We ask whether current approaches in machine learning are feasible and scalable, and which methods – if any – resemble the computations observed in biological brains. We review how high-level cognitive functions – attention, episodic memory, concept formation, reasoning and executive control – are being instantiated in artificial agents, and how their implementation draws upon what we know about the mammalian brain. Finally, we consider the outlook for the field, and whether AI will be “solved” in the near future.

Download all lecture slides and notes

- Lecture 1: A brief history of AI

- Introduction; A history of intelligent machines; symbolic AI; the computational approach to mind and brain; deep neural networks; statistical approaches to language modelling and LLMs.

- Watch video of lecture 1

- Watch OLD video of lecture 1

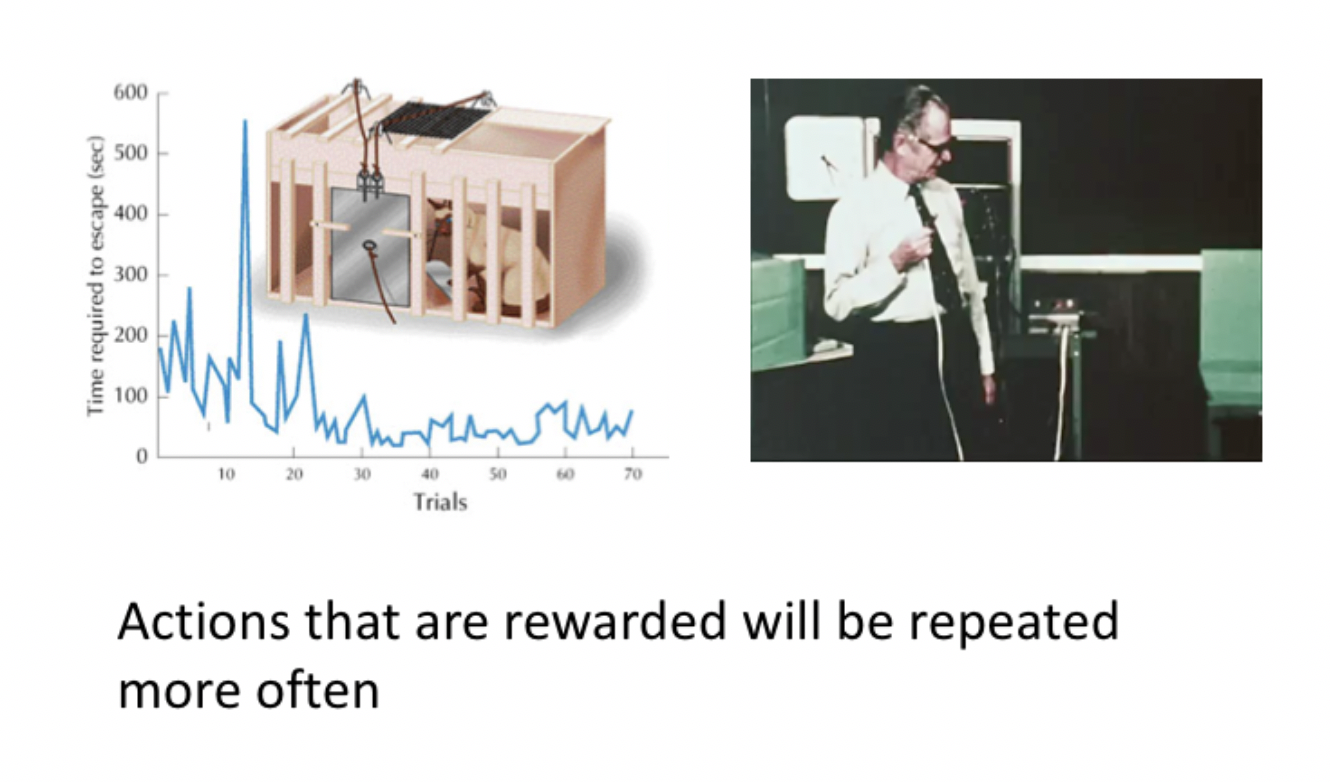

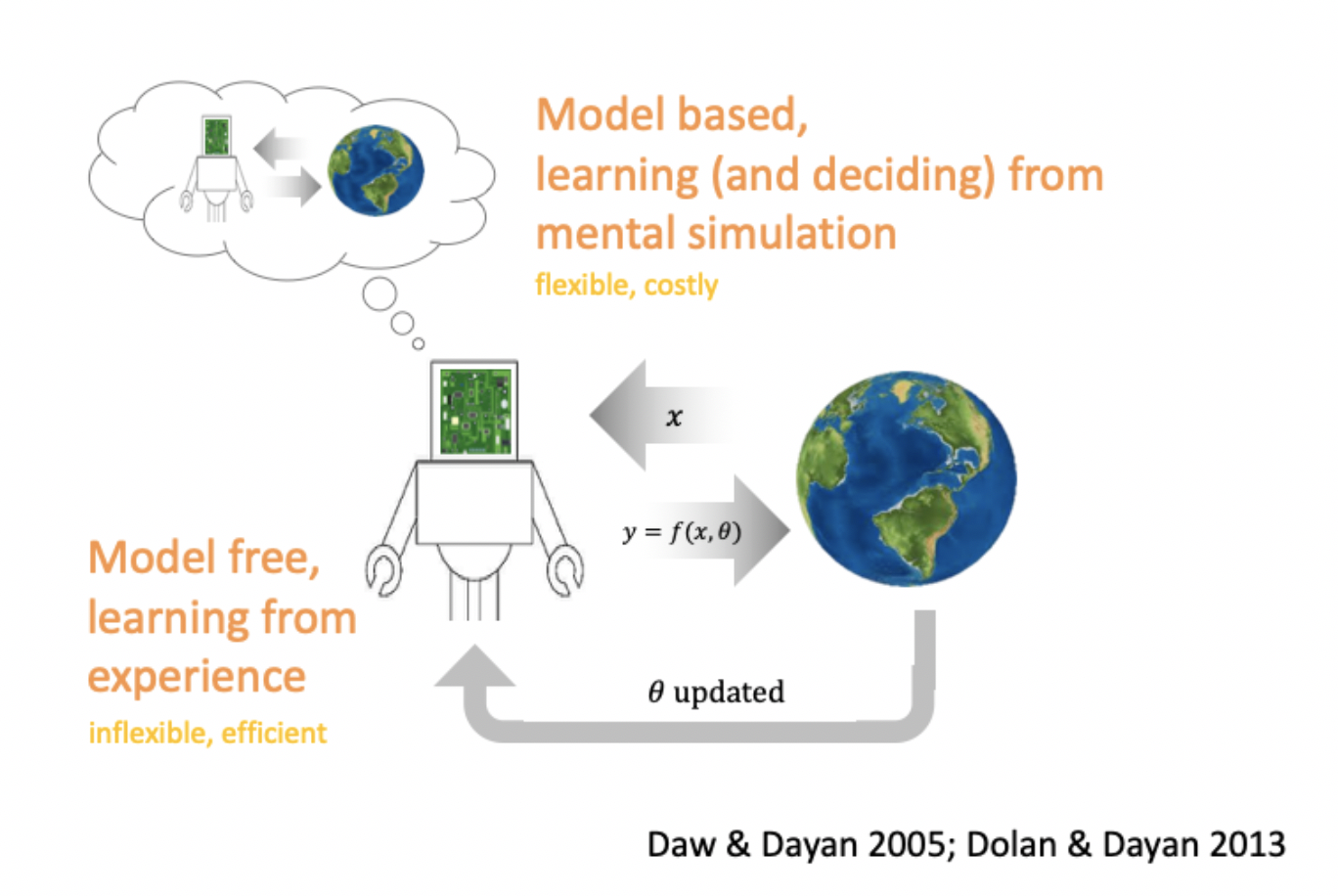

- Lecture 2: Model-free reinforcement learning

- Why do we have a brain; Classical and operant conditioning; reinforcement learning and the Bellman equation; Temporal difference learning; Q-learning, eligibility traces, actor-critic methods

- Watch video of lecture 2

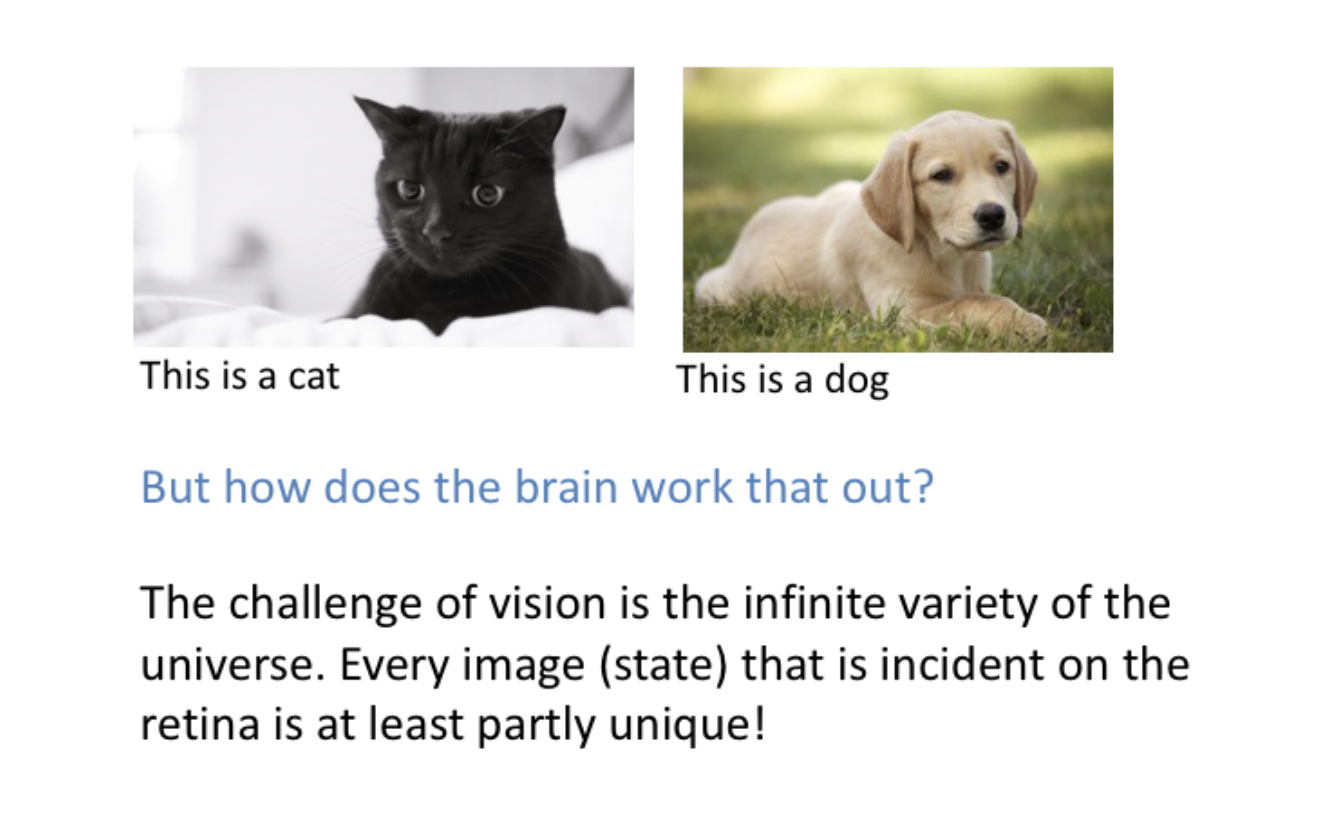

- Lecture 3: Feedforward networks and object categorisation

- Parametric models for object recognition; Critiques of pure representationalism; Perceptrons and sigmoid neurons; Depth: the multilayer perceptron; Challenges: optimisation, generalisation and overfitting

- Watch video of lecture 3

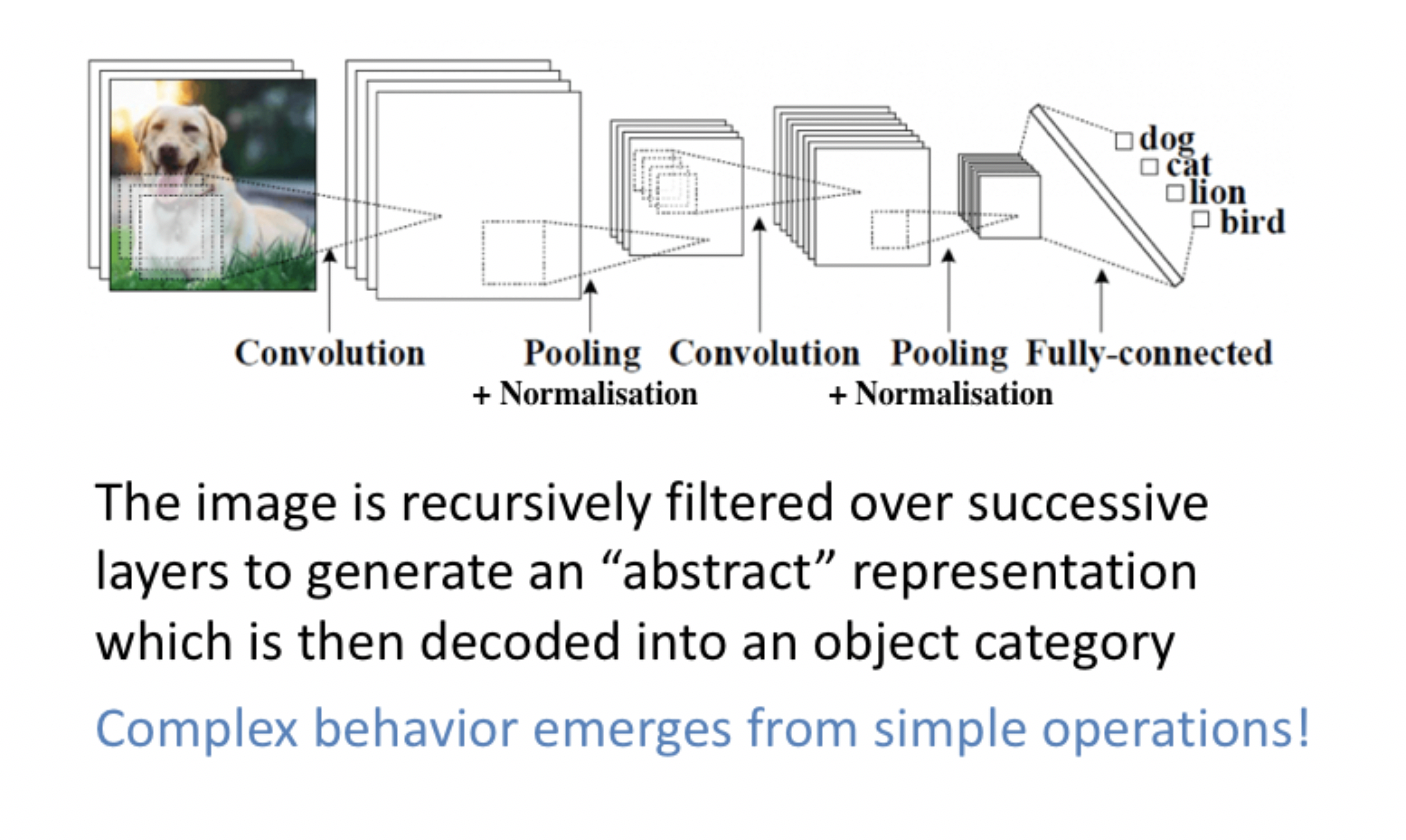

- Lecture 4: Structuring information in space and time

- Convnets and translational invariance; Convnets and the primate ventral stream; Limitations of feedforward deep networks; Hierarchies of temporal integration in the brain; Temporal integration in perceptual decision-making; Recurrent neural networks and the parietal cortex

- Watch video of lecture 4

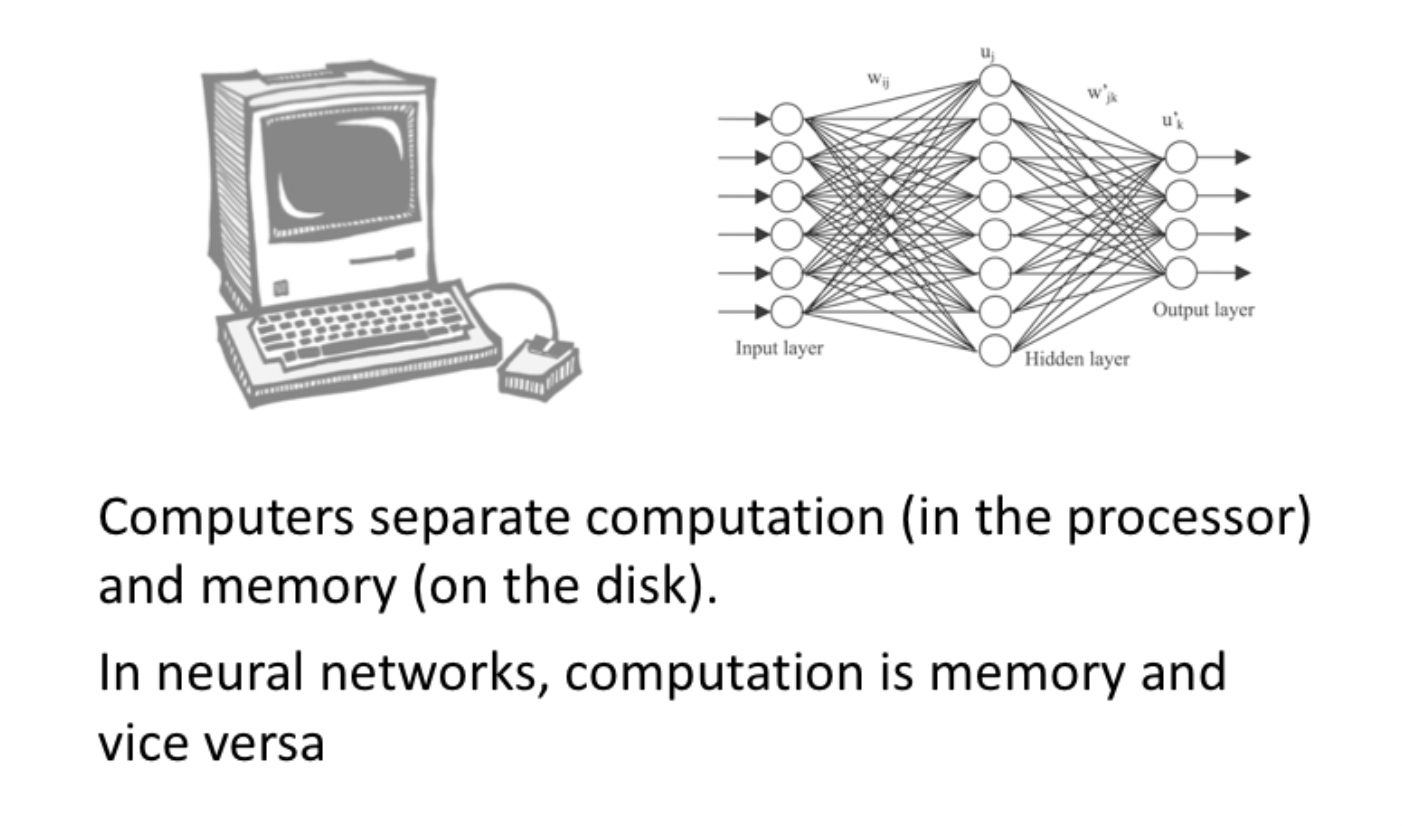

- Lecture 5: Computation and modular memory systems

- Modular memory systems; working memory gating in the PFC; LSTMs; The differentiable neural computer; The problem of continual learning

- Watch video of lecture 5

- Watch lecture 5 appendix

- Lecture 6: Complementary learning systems theory

- Dual process memory models; the hippocampus as a parametric storage device; experience-dependent replay and consolidation; the deep Q-network; knowledge partitioning and resource allocation

- Watch video of lecture 6

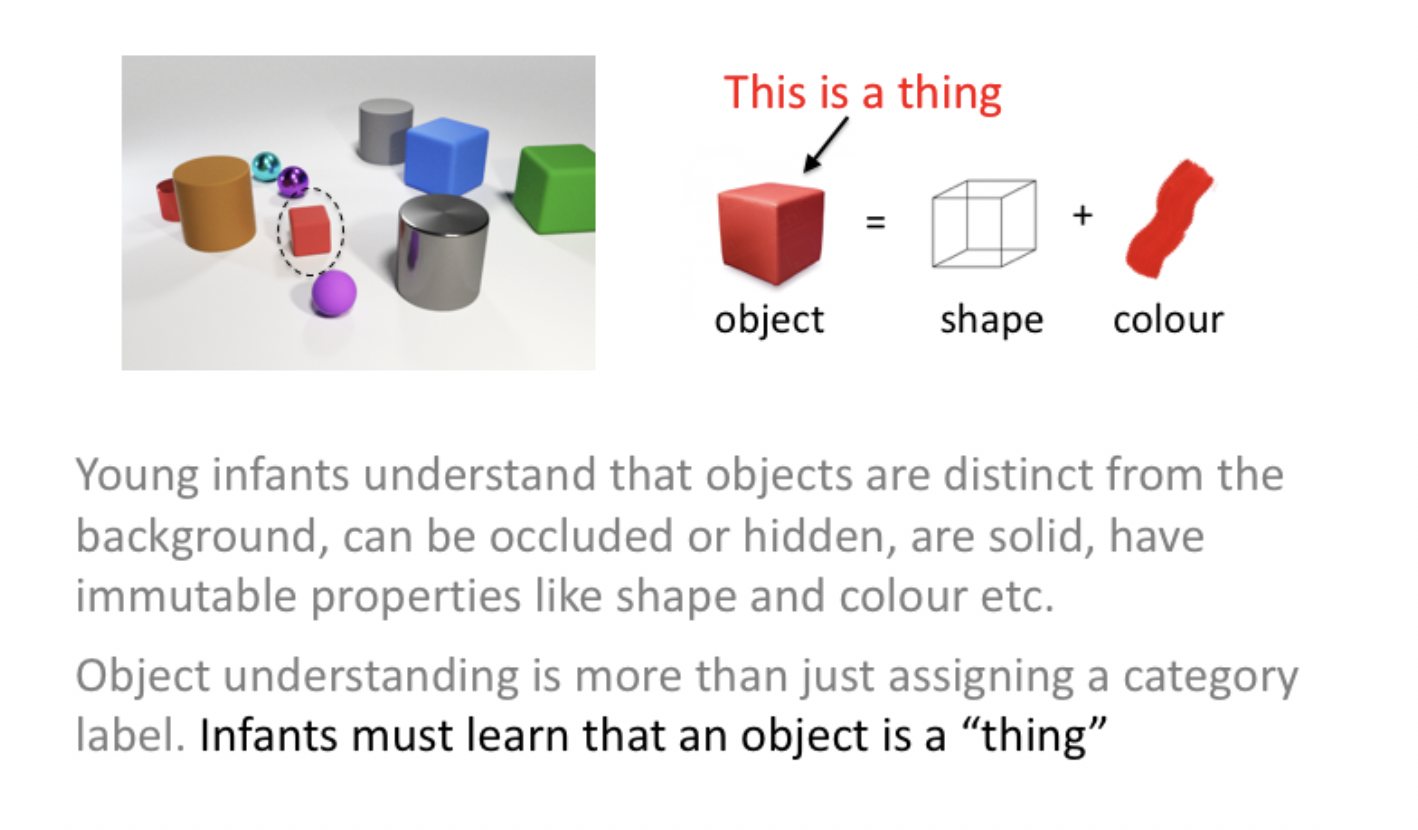

- Lecture 7: Unsupervised and generative models

- Unsupervised learning: knowing that a thing is a thing; Encoding models: Hebbian learning and sparse coding; Variational autoencoders; The Bayesian approach; Predictive coding

- Watch video of lecture 7

- Lecture 8: Building a model of the world for planning and reasoning

- Temporal abstraction in RL and the cingulate cortex; Multiple controllers for behaviour; Cognitive maps and the hippocampus; Hierarchical planning; Grid cells and conceptual knowledge

- Watch video of lecture 8

Reading list

Books

C. Summerfield, These Strange New Minds

M. Bennett, A Brief History of Intelligence

C. Summerfield, Natural General Intelligence

G. Lindsay, Models of the Mind

T. Sejnowski, The Deep Learning Revolution

D. Lee, The Birth of Intelligence

S. Gershman, What Makes Us Smart?

Review Articles

2022

Doerig A., Sommers R., Seeliger K., Richards B., Ismael J., Lindsay G., Kording K., Konkle T., van Gerven M.A., Kriegeskorte N., Kietzmann T. The neuroconnectionist research programme. https://arxiv.org/abs/2209.03718

Zador, A., et al. Toward Next-Generation Artificial Intelligence: Catalyzing the NeuroAI Revolution. https://arxiv.org/abs/2210.08340

Vogelstein, J.T. et al. Prospective Learning: Back to the Future. https://arxiv.org/abs/2201.07372

2020

Saxe, A., Nelli, S. & Summerfield, C. If deep learning is the answer, what is the question? Nat. Rev. Neurosci. 22, 55–67 (2020).

Marcus, G. The Next Decade in AI: Four Steps Towards Robust Artificial Intelligence. https://arxiv.org/abs/2002.06177

Hadsell, R., Rao, D., Rusu, A., Pascanu, R. Embracing Change: Continual Learning in Deep Neural Networks. Trends in Cognitive Sciences 24, 1028–1040 (2020).

Shanahan, M., Crosby, M., Beyret, B., Cheke, L. Artificial Intelligence and the Common Sense of Animals. Trends in Cognitive Sciences 24, 862–872 (2020).

Lindsay, G. W. Convolutional neural networks as a model of the visual system: past, present, and future. J. Cogn. Neurosci. https://doi.org/10.1162/jocn_a_01544 (2020).

Hasson, U., Nastase, S. A. & Goldstein, A. Direct fit to nature: an evolutionary perspective on biological and artificial neural networks. Neuron 105, 416–434 (2020).

Drummond N, Niv Y. Model-based decision making and model-free learning. Curr Biol. Aug 3;30(15):R860-R865 (2020).

Summerfield C, Luyckx F, Sheahan H. Structure learning and the posterior parietal cortex. Prog Neurobiol. Jan;184:101717 (2020).

2019

Richards, B. A. et al. A deep learning framework for neuroscience. Nat. Neurosci. 22, 1761–1770 (2019).

Sinz, F. H., Pitkow, X., Reimer, J., Bethge, M. & Tolias, A. S. Engineering a less artificial intelligence. Neuron 103, 967–979 (2019).

Kell, A. J. & McDermott, J. H. Deep neural network models of sensory systems: windows onto the role of task constraints. Curr. Opin. Neurobiol. 55, 121–132 (2019).

Cichy, R. M. & Kaiser, D. Deep neural networks as scientific models. Trends Cogn. Sci. 23, 305–317 (2019).

Zador, A. M. A critique of pure learning and what artificial neural networks can learn from animal brains. Nat. Commun. 10, 3770 (2019).

Lillicrap, T. P. & Kording, K. P. What does it mean to understand a neural network? Preprint at arXiv https://arxiv.org/abs/1907.06374 (2019).

2018

Behrens TEJ, Muller TH, Whittington JCR, Mark S, Baram AB, Stachenfeld KL, Kurth-Nelson Z. What Is a Cognitive Map? Organizing Knowledge for Flexible Behavior. Neuron. Oct 24;100(2):490-509 (2018).

2017

van Gerven M. Computational Foundations of Natural Intelligence. Front Comput Neurosci. 11:112 (2017).

Bowers, J. S. Parallel distributed processing theory in the age of deep networks. Trends Cogn. Sci. 21, 950–961 (2017).

Hassabis D, Kumaran D, Summerfield C, Botvinick M. Neuroscience-Inspired Artificial Intelligence. Neuron. 95(2):245-258 (2017).

Lake, B. M., Ullman, T. D., Tenenbaum, J. B. & Gershman, S. J. Building machines that learn and think like people. Behav. Brain Sci. 40, e253 (2017).

2016

Ullman, S., Assif, L., Fetaya, E. & Harari, D. Atoms of recognition in human and computer vision. Proc. Natl Acad. Sci. USA 113, 2744–2749 (2016).

Marblestone, A. H., Wayne, G. & Kording, K. P. Toward an integration of deep learning and neuroscience. Front. Comput. Neurosci. 10, 1–61 (2016).

Yamins, D. L. K. & DiCarlo, J. J. Using goal-driven deep learning models to understand sensory cortex. Nat. Neurosci. 19, 356–365 (2016).

Earlier

Kriegeskorte, N. Deep neural networks: a new framework for modeling biological vision and brain information processing. Annu. Rev. Vis. Sci. 1, 417–446 (2015).

Rogers, T. T. & McClelland, J. L. Parallel distributed processing at 25: further explorations in the microstructure of cognition. Cogn. Sci. 38, 1024–1077 (2014).